Default-utilitarianism in public discourse and its discontents.

A polemic against "default-utilitarianism" — the unreflective folk consequentialism that saturates Anglophone public reasoning, mistakes its borrowed scientific authority for rigor, and has impoverished moral discourse at the cost of dignity, rights, and character.

A disturbing trend I have noticed in public discourse is what I will call "default-utilitarianism": the tendency of people, especially in Anglophone countries, to fall back on some form of consequentialism even in the absence of formal ethical education, so that it becomes the background radiation against which public moral discourse takes place.

This essay is not a critique of Bentham, Mill, Sidgwick, Parfit, or Singer. The utilitarian philosophical tradition is a serious one, and it has generated serious responses to the serious objections that have been leveled against it for two centuries. Sophisticated utilitarians are aware of the utility monster. They have grappled with the repugnant conclusion. They know about Nozick's experience machine. The target of what follows is something humbler and more pervasive than academic moral philosophy, to wit: a folk ethical posture that saturates Anglophone public reasoning without being properly held or consciously adopted by anyone. Thus, default-utilitarianism: the unreflective assumption that if a policy, action, or institution can be shown to produce net benefit for a sufficient number of people, no further moral analysis is required. It is the folk belief, rarely stated so nakedly, that ends justify means when the ends are desirable enough, dressed in the borrowed authority of empirical science and presented as the obvious starting point for any sensible, modern person thinking about ethics.

What makes this posture worth attacking is not simply that it is philosophically mistaken, though it is. It is that it presents itself as the alternative to philosophical confusion: as the rational, scientific, grown-up position that replaces the moralistic hand-wringing of less rigorous approaches. And it is this pretense, more than anything else, that has done damage: not by imposing a coherent utilitarian calculus on public life, but by providing an infinitely flexible rhetorical resource with which almost any policy can be justified, almost any right can be overridden, and almost any objection can be dismissed as sentiment obstructing the greater good.

Scientism and Pretense

The epistemic backdrop against which we lay our scene.

I propose that folk consequentialism becomes the default ethical theory in these circles because it "feels" scientific to the unreflective mind. And just as utilitarianism is adopted as the default ethical theory, ontological and methodological naturalism are often adopted by default in a technocratic society where scientific language games are privileged over others for their compelling force.

The first thing to understand about default-utilitarianism is why it feels scientific. The answer is that it mimics the structure of empirical reasoning: there are inputs (harms, benefits, preferences, welfare states), there are outputs (net utility, aggregate wellbeing), and there is a procedure (calculate, optimize, choose the action that maximizes the output). This looks like what scientists do. It feels rigorous in a way that talking about rights, duties, character, or dignity does not, because those concepts resist quantification while welfare—or so it seems—can at least in principle be measured.

This feeling is an illusion, and the philosopher who first exposed its mechanism with the greatest precision was G.E. Moore, in Principia Ethica (1903). Moore's open question argument is straightforward and devastating. Suppose you define "good" in terms of some natural property: pleasure, say, or preference satisfaction, or welfare. Now ask: is pleasure good? Is preference satisfaction good? Is welfare good? These questions feel open. They do not feel like asking whether a bachelor is unmarried, a question whose answer is analytic for anyone who understands the concepts at issue. No matter how carefully you specify the natural property you want to identify with goodness, you can always intelligibly ask whether that property is in fact good, and the question will not feel trivial. Moore took this as evidence that goodness is not identical to any natural property, and that any attempt to define the good in terms of facts about the world commits what he called the naturalistic fallacy.

The implication for folk consequentialism is immediate. The entire apparatus of welfare aggregation and outcome optimization is built on a foundational normative axiom: that what we ought to maximize is aggregate welfare, or utility, or preference satisfaction, in whichever currency the theory adopts. This axiom is not a scientific finding. It is not derived from empirical observation. It is a substantive moral commitment, and it is no more grounded in natural fact than the deontologist's claim that persons have inviolable rights or the virtue ethicist's claim that we ought to cultivate practical wisdom. The folk consequentialist who dismisses these alternatives as unscientific while defending their own view as the rational, evidence-based approach has not noticed that their foundational commitment is just as metaphysically loaded as the alternatives. It is simply less visible to them because it is assumed rather than argued.

This is, to be precise, not merely a philosophical error. It is a form of self-deception with practical consequences, because it means that the folk consequentialist is not actually reasoning from evidence to moral conclusions. They are choosing moral conclusions on grounds that are ultimately no different from anyone else's, and then presenting those conclusions as if they had been derived by a process that others who disagree have simply failed to perform correctly. The lab coat is borrowed. The metaphysics is smuggled, a hitchhiker that uses semantic and syntactic similarity to infiltrate the world of ideas.

But Moore, for all his acuity, diagnoses only the logical pathology. To understand why this pathology is so deeply rooted in Anglophone culture, or why the naturalistic fallacy feels not like a fallacy but like common sense, we must adopt a wider frame. We find it in Edmund Husserl's late masterwork, The Crisis of European Sciences and Transcendental Phenomenology, written in the 1930s as Husserl watched the civilization he had spent his life analyzing descend into catastrophe.

Husserl's argument begins with Galileo, whom he calls, in a famous phrase, a "discovering and concealing genius." What Galileo discovered—or rather, what the mathematization of nature that Galileo inaugurated made possible—was the extraordinary power of treating nature as a formal mathematical structure, replacing the qualitative richness of sensory experience with quantitative relationships between abstract entities. The physical world, on this picture, just is the world as mathematical physics describes it: a system of masses, forces, and fields, fully characterizable in the language of equations. This abstraction proved enormously productive. It generated modern science, modern technology, and ultimately the material conditions of modern life.

What Galileo concealed, or what the success of mathematical physics caused European civilization to forget, was that this formal, quantified world is an abstraction built upon what Husserl calls the Lebenswelt, the life-world: the pre-theoretical, lived, experience-laden world in which human beings actually exist. The life-world is irreducibly qualitative. It is the world of colors and sounds and smells, of meanings and obligations, of relationships and loyalties and dignities. Mathematical physics does not describe this world; it deliberately sets it aside in favor of a formal model. The crisis Husserl identifies is that European thought, dazzled by the success of the formal model, came to treat it as more real than the life-world it was built upon. The abstraction was mistaken for the reality. The map was taken for the territory. And since the life-world, the domain of meaning, value, and lived experience, could not be accommodated in the formal model, it came to be seen as philosophically suspect: as mere subjectivity, mere sentiment, mere appearance behind which the real mathematical structure lay hidden.

The consequence for ethics is exactly the pathology this essay is diagnosing. The life-world is, among other things, a moral world. We inhabit a domain saturated with value: we experience obligations, we recognize dignities, we feel the weight of injustice, we know without calculation that some things are wrong. These are not epiphenomena awaiting reduction to natural fact. They are the primary data of moral experience. When folk consequentialism reaches for the authority of mathematical science to answer ethical questions, when it insists that the proper procedure for moral reasoning is to quantify, aggregate, and optimize, it commits precisely the error Husserl identifies: it treats the formal abstraction (utility calculus) as more morally real than the lived experience of moral agency (the sense that this person's dignity may not be violated, that this promise creates an obligation, that this act is simply wrong regardless of the numbers). The concepts that cannot be captured in the welfare calculus (dignity, desert, integrity, rights) are demoted to mere sentiment, obstacles to clear thinking rather than the very substance of what moral thinking is about. Husserl's crisis of the sciences becomes, in miniature, a crisis of ethics: the formalism meant to model moral experience is mistaken for the experience itself, and the life-world of genuine moral agency is dismissed as unscientific.

The Historical Problematique

Or, how we got to where we are.

To understand why this has happened in Anglophone culture specifically, you need to trace two intersecting intellectual genealogies.

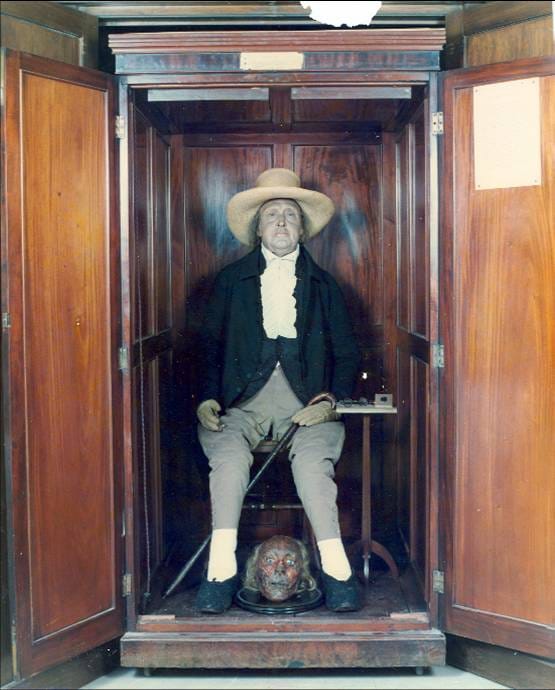

The first is the obvious one: the British empiricist tradition running from Locke through Hume to Bentham, Mill, and Sidgwick. Bentham's hedonic calculus, Mill's refinement of it, and Sidgwick's systematic attempt in The Methods of Ethics to put utilitarian reasoning on a rigorous philosophical footing established utilitarianism as the characteristic ethical theory of the English-speaking world. The association is so deep that in much of Anglophone academic philosophy, "ethics" still means primarily either utilitarianism or whatever theory you adopt to avoid the problems of utilitarianism.

But the second genealogy is at least as important, and less often recognized: the distinctly American tradition of philosophical pragmatism. Dewey's instrumentalism (the view that beliefs and practices are to be evaluated by their consequences for human experience, that "true" means "useful," and that thought is fundamentally a tool for solving problems) is not Benthamite in any direct sense. Dewey was not running utility calculations. But his philosophy created a native American intellectual idiom, independent of the British utilitarian tradition, in which consequentialist reasoning feels like plain good sense rather than a contestable theoretical commitment. If the test of any idea is what it does, if the criterion of any practice is how well it solves the problems it was adopted to solve, then the instinct to ask "what are the outcomes?" and to judge by outcomes becomes almost automatic. Pragmatism did not teach Americans to be utilitarians; it taught them to think in a way that made folk consequentialism feel like common sense, so that alternatives to it feel like mysticism or special pleading.

Underlying both traditions is what might be called the background assumption of educated Anglophone thought: ontological naturalism, the view that natural science describes all of reality and that anything not amenable to scientific description is either reducible to natural facts or not fully real. This assumption is rarely stated explicitly and almost never argued for; it functions as a default, a tacit framework within which more specific debates take place. For ethics, its effect is to create an environment in which theories that can at least gesture toward empirical grounding—that can claim to measure something, quantify something, optimize something—enjoy an enormous presumption of legitimacy that theories making irreducibly normative claims do not. Consequentialism can claim to be doing something like empirical work. Deontology, with its categorical imperatives and inviolable rights, cannot. Virtue ethics, with its focus on character and practical wisdom, cannot. The naturalist background assumption does not establish the truth of consequentialism, but it tilts the cultural playing field dramatically in its favor, so that it becomes the default, or the position you hold unless you've done the philosophical work to convince yourself otherwise.

The Center Cannot Hold

What the folk-consequentialist calculus cannot survive.

The reductios against folk consequentialism are not secret. They are familiar to anyone who has spent time in a philosophy classroom. That they have not dented the folk theory's cultural dominance is itself a datum worth noting: what is at stake is not a theory people have thought through and endorsed, but a posture they have never subjected to the kind of scrutiny that would expose it to objections.

Still, the objections are worth stating plainly, because when the folk consequentialist encounters them fresh, they reliably generate something more like recognition than surprise, the recognition that the theory's conclusions, when made explicit, are things that everyone already knew were monstrous.

Consider Robert Nozick's experience machine, from Anarchy, State, and Utopia (1974). If what matters morally is welfare—if the good is constituted by subjective states of wellbeing, pleasure, preference satisfaction—then you should have very strong reason to plug yourself into a machine that delivers a lifetime of simulated perfect happiness. The machine will give you more welfare than you could plausibly achieve in actual life. A committed welfarist should prefer the machine to reality. Almost no one would choose this. Our refusal is not irrational; it reflects a genuine insight: we care about things that welfare theories cannot accommodate. We care about authentically achieving things, not merely having the experience of achieving them. We care about our relationships being real, not simulated. We care, in some deep sense, about actually being in contact with the world rather than floating in a tank of pleasant illusions. The experience machine demonstrates that pure welfarism fails as an account of what we value, because it cannot explain why almost everyone, on reflection, would decline an offer that maximizes their welfare.

Derek Parfit's repugnant conclusion is more technical but no less devastating for policy applications. Consistent population-level consequentialism, Parfit showed, leads to the result that a world containing a vast number of people whose lives are barely worth living is better than a world containing a much smaller number of people living lives of genuine flourishing, as long as the aggregate welfare of the larger world is greater. This is not a reductio of Parfit, who recognized it as a profound and perhaps insoluble problem: his life's work was in large part an attempt to navigate it. It is a reductio of anyone who invokes aggregate welfare reasoning in policy argument without having reckoned with this consequence. Policies affecting population, public health, immigration, resource distribution all involve the kind of aggregate reasoning that the repugnant conclusion reveals as internally pathological. The folk consequentialist reaching for welfare aggregation to justify these policies has almost certainly not asked whether the logic they are deploying commits them to preferring a planet of barely-surviving billions to a civilization of flourishing millions.
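The arithmetic behind the repugnant conclusion can be made concrete with a small sketch. The Python below is only an illustration under stated assumptions: the `World` class, the welfare units, and the population figures are all hypothetical, chosen so the sums are easy to check by hand, and the comparison rule is bare total utilitarianism, not a position Parfit himself endorsed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class World:
    """A hypothetical population with a uniform welfare level per person."""
    name: str
    population: int
    # Illustrative units only: any positive value counts as a life worth living,
    # and 1 stands for a life that is barely worth living.
    welfare_per_person: int

    def total_welfare(self) -> int:
        # Simple summation: the only quantity a total utilitarian compares.
        return self.population * self.welfare_per_person


def total_utilitarian_choice(a: World, b: World) -> World:
    """Return whichever world has the larger sum of welfare (ties go to a)."""
    return a if a.total_welfare() >= b.total_welfare() else b


# Ten million people flourishing versus ten billion people barely getting by.
flourishing = World("A", population=10_000_000, welfare_per_person=90)
crowded = World("Z", population=10_000_000_000, welfare_per_person=1)

preferred = total_utilitarian_choice(flourishing, crowded)
print(preferred.name, preferred.total_welfare())
```

On these made-up figures the sum for the crowded world (10,000,000,000) exceeds the sum for the flourishing world (900,000,000), so the rule selects the crowded world. Nothing in the procedure registers how any individual life goes; only the total enters the comparison.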

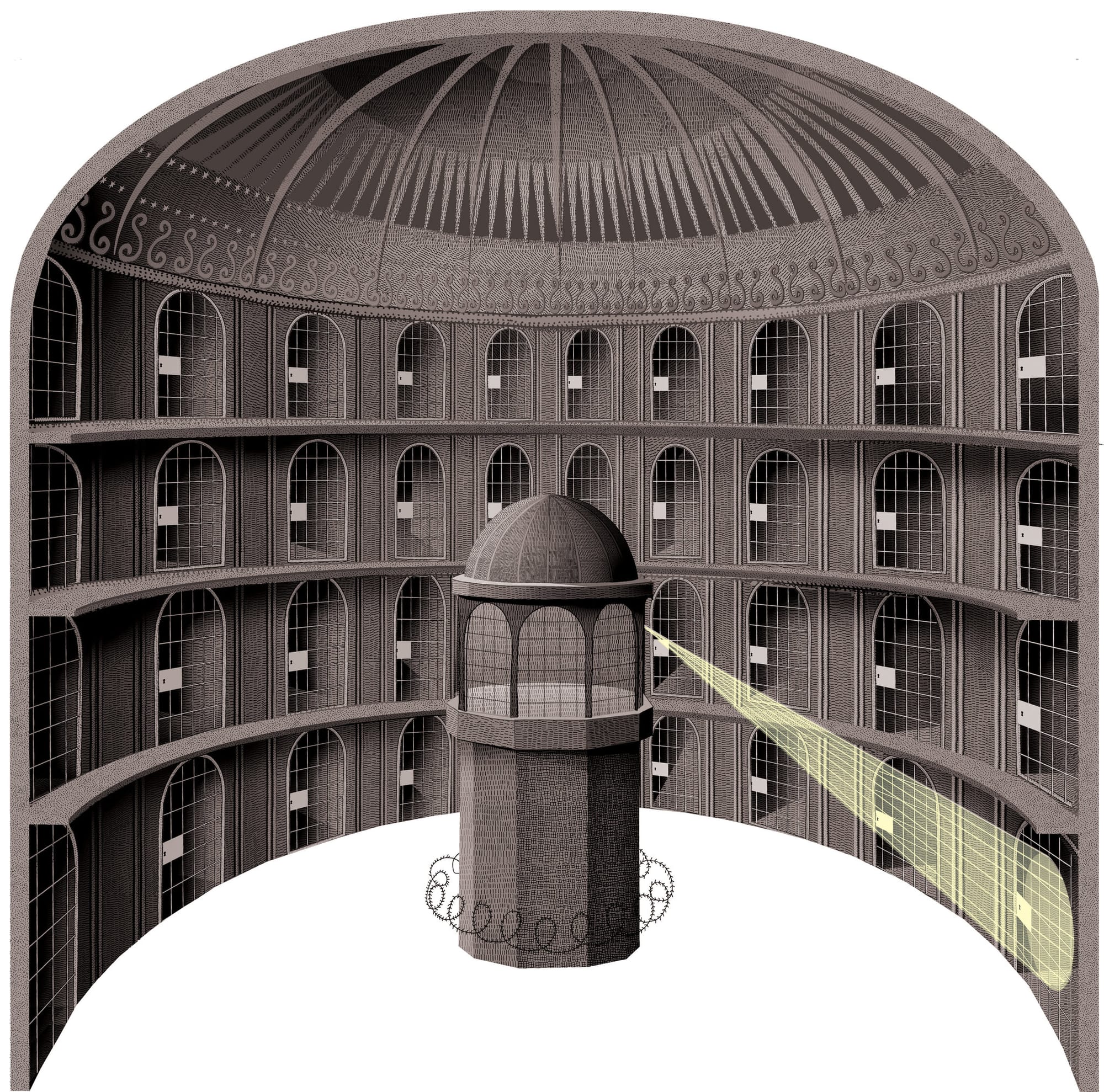

John Rawls, in A Theory of Justice, identifies what is perhaps the deepest structural problem: utilitarianism does not take seriously the separateness of persons. It treats society as if it were a single individual whose aggregate satisfactions can be summed and traded off, maximizing the total as you would maximize an individual's welfare over time. But persons are distinct. No one experiences the aggregate. When one person's fundamental interests are sacrificed for the greater benefit of many, that person is not compensated by the aggregate gain they do not share in. The logic of sacrifice that utilitarianism licenses (the one for the many, the individual for the collective, the minority for the majority) fails as moral justification because it treats the person sacrificed as a resource to be optimized rather than an end in themselves. This is precisely the moral grammar that constitutional rights and legal protections against majoritarian overreach are designed to enforce: the recognition that there are things you may not do to a person, regardless of what the aggregate numbers say.

Bernard Williams, in his contribution to Utilitarianism: For and Against (co-authored with J.J.C. Smart), twists a different knife: the integrity objection. If what I must do is always determined by impersonal welfare calculation, if every commitment, every relationship, every project I care about is in principle overridable when the numbers require it, then I have no moral space to be someone, to have commitments that function as genuine constraints on my action rather than considerations to be weighed and potentially discarded. A person who would abandon any commitment if the utilitarian calculus so demanded is not a person of integrity. And this is not merely an observation about psychology. It reflects something morally important: the agent matters, not merely as a site of welfare production, but as someone whose projects, relationships, and character have intrinsic moral significance. Consequentialism, by making every action a function of impersonal outcomes, systematically discounts this significance.

Finally, there is Nozick's utility monster, a thought experiment less elegant than the experience machine but more viscerally clarifying, which is perhaps why I prefer it as my go-to example of the moral vacuity of consequentialist thinking. Imagine a being that derives such enormous utility from consuming resources (including other persons) that feeding it, however many people this harms, consistently maximizes aggregate welfare. Consistent consequentialism requires feeding the monster. The horror of this conclusion is not a limitation of the example; it is the point. It shows that the logic of welfare aggregation, pursued consistently, has no internal mechanism for protecting persons from being sacrificed to aggregate benefit. The only way to generate such protections within a consequentialist framework is to introduce constraints that are not themselves consequentialist, which is to concede that consequentialism, as a complete moral theory, is insufficient.
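The structure of the utility monster can be shown in a few lines. What follows is a toy sketch, not a model of any real allocation problem: the function name `maximize_total`, the agents, and the gain-per-unit figures are hypothetical, and the assumption that each agent's gain per unit is constant is a simplification made only to keep the example short.

```python
def maximize_total(units: int, gain_per_unit: dict[str, int]) -> dict[str, int]:
    """Hand out resources one unit at a time to whoever gains the most.

    With constant gains per unit (an assumption of this sketch), this greedy
    rule maximizes the sum of welfare across all agents.
    """
    allocation = dict.fromkeys(gain_per_unit, 0)
    for _ in range(units):
        # The only question asked is: where does this unit add the most welfare?
        best = max(gain_per_unit, key=gain_per_unit.get)
        allocation[best] += 1
    return allocation


# Hypothetical figures: three ordinary people and one utility monster.
gains = {"person_1": 10, "person_2": 10, "person_3": 10, "monster": 1000}
allocation = maximize_total(100, gains)
total = sum(gains[name] * share for name, share in allocation.items())
print(allocation, total)
```

Under these assumptions every unit goes to the monster and the ordinary people receive nothing. Changing that result requires adding a rule from outside the sum, such as a guaranteed minimum share per person, which is exactly the kind of non-consequentialist constraint the paragraph above describes.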

So, if default-utilitarianism is bankrupt and insufficient, how can it be countered if the reasons for adopting it are not strictly rational?

The Pluralism of Alternatives

With advocacy for none, a survey of ethical and metaethical options.

The purpose of these reductios is not to establish a single rival theory in place of folk consequentialism. The argument here is pluralistic: virtually any other systematic approach to ethics—including approaches that deny that moral claims have truth values at all—is preferable to folk consequentialism as a default moral posture.

Deontological ethics, in its Kantian form, begins from the dignity of persons as rational agents who are ends in themselves, never merely means. Rights, on Nozick's formulation, function as side-constraints: there are things you simply may not do to a person regardless of what the aggregate benefits would be. This is not mysticism. It is the recognition that persons have a kind of inviolability that is prior to any calculation of net benefit, that moral reasoning must have a grammar of no that does not depend on showing that the numbers come out differently. This maps naturally onto legal and constitutional traditions, in which the whole point of rights-based protections is precisely that they cannot be subjected to majoritarian cost-benefit analysis. The deontological vocabulary (duty, dignity, rights, inviolability) provides resources that folk consequentialism systematically lacks, and their absence from public moral reasoning has made that reasoning impoverished in ways that are practically consequential.

Virtue ethics offers a different corrective, one that operates at the level of character rather than rules or outcomes. The central question is not "what act produces the best result?" or "what rule must be followed?" but "what kind of person should I be?" and "what would a person of practical wisdom and good character do?" This is morally serious in ways that folk consequentialism is not, because it puts motive and character at the center of ethical evaluation. The person who does the right thing for purely calculating reasons—who, say, tells the truth because on reflection it maximizes welfare—is not fully virtuous by the lights of any moral tradition that takes character seriously. And our intuitions track this: we recognize a difference between a person who is honest because honesty is central to who they are and a person who is honest only when it pays. Virtue ethics is also more psychologically realistic than consequentialism. It offers an account of moral formation, of habituation and character development, of practical wisdom as a capacity that is cultivated over time rather than deployed on demand, an account that connects ethics to the actual texture of how human beings develop and sustain moral lives.

These two traditions, deontological and virtue-based, different as they are, share something important against consequentialism: a commitment to the moral significance of the agent. Both take seriously the idea that persons, along with their rights, their character, and their integrity, matter morally in ways that cannot be cashed out as contributions to aggregate welfare. Both provide resources for saying that some things are wrong regardless of outcomes, and for articulating why. Together, they represent what a morally pluralistic public culture requires: multiple vocabularies for moral evaluation that can check and correct each other and that collectively resist the reduction of ethics to a single optimization procedure.

But there is a more surprising comparison to be made, one that illuminates the specific dishonesty of folk consequentialism. The emotivist and non-cognitivist theories in metaethics, e.g., A.J. Ayer's verificationist dismissal of moral claims as expressions of emotion rather than factual assertions, C.L. Stevenson's analysis of moral language as primarily persuasive rather than descriptive, R.M. Hare's prescriptivism, and the more sophisticated expressivism of Allan Gibbard and Simon Blackburn, are often thought of as deflationary or even nihilistic about ethics. They deny, in various ways, that moral claims have truth values, that there are moral facts, or that moral reasoning is genuinely cognitive. These are positions that many, including this writer, find ultimately untenable. But notice what these theories do not do: they do not pretend that moral conclusions are the outputs of a scientific procedure. Emotivism, in particular, is at least honest about what is happening when we make moral claims. When Ayer says that "cruelty is wrong" expresses an attitude rather than stating a fact, he is at minimum acknowledging that moral language operates differently from empirical description, that we are not simply reporting a natural property when we make an ethical judgment.

Folk consequentialism performs a more insidious maneuver. It claims to be doing empirical work—calculating welfare, measuring outcomes, optimizing for aggregate benefit—while smuggling in a foundational normative commitment (welfare is what we ought to maximize) that is no more scientifically grounded than any other moral axiom. It then presents its conclusions with the false authority of scientific objectivity, lending to what are ultimately contested moral judgments the rhetorical force of findings. The emotivist who says "I oppose this policy" is at least transparent about the nature of the claim. The folk consequentialist who says "this policy is supported by a cost-benefit analysis" has dressed the same claim in a costume it does not deserve.

The non-cognitivist, by refusing to reduce ethics to natural facts, paradoxically honors the life-world's irreducibility better than the theory that presents itself as the more rigorous alternative. Husserl's insight cuts here too: the emotivist who acknowledges that moral judgments are not descriptions of natural facts is closer to an accurate phenomenology of moral experience than the folk consequentialist who insists on treating them as optimization problems with measurable solutions.

Why This Matters

Default-utilitarianism's tentacles in policy debates.

The practical consequences of this philosophical confusion are not abstract. Default-utilitarianism has been the dominant moral idiom of American policy reasoning for most of the past century, and the results are visible in several characteristic patterns.

The most direct is what might be called the override: the use of aggregate welfare claims to justify policies that visibly harm identifiable individuals or groups. The post-September 11 architecture of mass surveillance is a clear case. The argument for it was never seriously contested on its own terms: security benefits to the many, diffuse and difficult to verify, were held to outweigh privacy costs to the many, concrete and demonstrable. The deontological objection—that persons have rights against monitoring that are not derived from any welfare calculus, that the state's authority to surveil its citizens is categorically constrained regardless of the aggregate benefits—was consistently treated as mere sentiment, as the kind of thing one says before getting serious. The numbers, in the event, were never rigorously established. The security benefits turned out to be, at best, marginal. But the consequentialist frame was never abandoned; its failure was treated as an empirical disappointment rather than as evidence that the frame itself was wrong.

Punitive carceral policy is a more structurally interesting case. Deterrence justifications for mass incarceration treat punishment as a welfare-maximizing instrument — a tool for producing outcomes (crime reduction) rather than as a morally appropriate response to wrongdoing. This is not merely a philosophical nicety. It is a corruption of the moral logic that gives punishment its only legitimate justification. The Hegelian desert-based account (that an offender ought to bear a proportionate consequence for their act, because this is what justice requires) treats the offender as a moral agent who is owed a proportionate response to what they have done. The deterrence account treats the offender as a resource: their suffering is instrumentalized to produce effects in others. The person being punished is used as a means to produce utility in the aggregate, in straightforward violation of the Kantian principle that persons may never be used merely as means. It is not possible to understand the scale and character of American mass incarceration without grasping how thoroughly the consequentialist frame displaced the desert frame in twentieth-century penology, and what followed from that displacement.

Technocratic paternalism—in both its libertarian-utilitarian form (maximize GDP, aggregate wealth, measurable preference satisfaction) and its progressive-utilitarian form (optimize welfare metrics, maximize measurable wellbeing across populations)—licenses overriding individual choice and community self-determination when the aggregate numbers support doing so. Both versions treat persons as sites of welfare production rather than as agents with intrinsic authority over their own lives. The individual who makes choices that reduce their measurable welfare is, on both accounts, a problem to be corrected rather than an agent to be respected. The community that organizes itself according to values that cannot be captured in welfare metrics (religious communities, traditional communities, communities that prioritize belonging over optimization) is treated as backward rather than as exercising a legitimate form of self-determination.

The deepest problem, however, is structural. Default-utilitarianism, because it lacks any internal mechanism for setting limits on what aggregate benefits can justify, functions as a nearly universal tool of motivated reasoning in policy argument. Any position can be defended by asserting—without rigorous demonstration—that it produces net benefit. Any opponent can be dismissed as letting sentiment obstruct the clear-eyed pursuit of good outcomes. The consequentialist frame, deployed rhetorically rather than rigorously, becomes an unfalsifiable justificatory machine: if the policy produces bad outcomes, that is an empirical disappointment requiring adjustment; it is never evidence that the framework itself is wrong or that the thing being pursued was never a legitimate target of optimization. A moral vocabulary that can justify almost anything while appearing to be the voice of science and reason is not a moral vocabulary at all. It is a rhetoric of moral authority, and a remarkably successful one.

Contra Default-Utilitarianism

The conclusion of this argument is not a triumphant alternative theory. Any honest conclusion must be pluralistic. Deontology provides indispensable resources for protecting persons against aggregate demands; virtue ethics provides indispensable resources for understanding moral character and motivation; even the emotivist's unflinching acknowledgment that moral claims are not empirical findings preserves something important about intellectual honesty in ethics. No single theory has yet proved adequate to the full range of moral experience, and the confidence with which folk consequentialism claims to have resolved what centuries of moral philosophy have not resolved should itself be a reason for suspicion.

What can be said is this: a genuine moral culture requires multiple vocabularies, that is, rights and duties, character and virtue, communal norms, and an honest acknowledgment of the non-algorithmic character of moral judgment. It requires the capacity to say, without flinching and without needing to run a calculation, that some things are simply wrong, that persons have dignity that cannot be traded against aggregate welfare, that obligations create constraints that do not dissolve when the numbers favor dissolving them, that what kind of people we are and what kind of community we sustain matter morally in ways that no welfare metric captures.

What default-utilitarianism has done is crowd out these vocabularies by presenting itself as the rigorous alternative to them, as what you are left with once you strip away mysticism, sentiment, and pre-scientific moralizing. It has not replaced them. Dignity and desert and integrity have not stopped mattering to people because they cannot be quantified; they have simply been driven underground, where they continue to shape moral intuitions that have been denied the philosophical vocabulary they need to articulate themselves. The consequence is a public moral discourse that is simultaneously impoverished and dishonest: impoverished because it lacks the concepts necessary to say what people actually believe, dishonest because it presents contested moral commitments as scientific findings and disguises the normative axioms that do all the real work.

Husserl thought the crisis of the European sciences was a crisis of European humanity, a civilization that had forgotten, under the dazzle of its own scientific achievements, what questions it had originally set out to answer. The crisis of folk consequentialism is a smaller instance of the same forgetting: a culture that has mistaken its moral abstractions for its moral experience, its welfare calculus for its actual values, and the appearance of rigor for the substance of ethical seriousness. The recovery is not a matter of adopting the right theory; there are many alternatives, almost all of them superior. The recovery must be a gradual and definite shift from these default perspectives to more introspective and thoughtful ones, and that can only happen when we elevate the discourse, discard the old language games, and embrace the possibility of greater understanding through epistemic and dialectical optimism about the narratives we tell ourselves.